Here are the slides for the talk I just gave at WCNYC 2016, titled “Building a Better WordPress through Software Archaeology”.

WordCamp Chicago 2016 slides

I just finished giving a talk at WordCamp Chicago titled “Backward Compatibility as a Design Principle”, in which I discussed WordPress’s approach to backward compatibility, how it’s evolved over the years, and its costs and benefits when compared to the alternatives. I’m not sure that the slides are very helpful in isolation, but someone asked me to post them, and I am not one to disappoint my Adoring Fans. Embedded below.

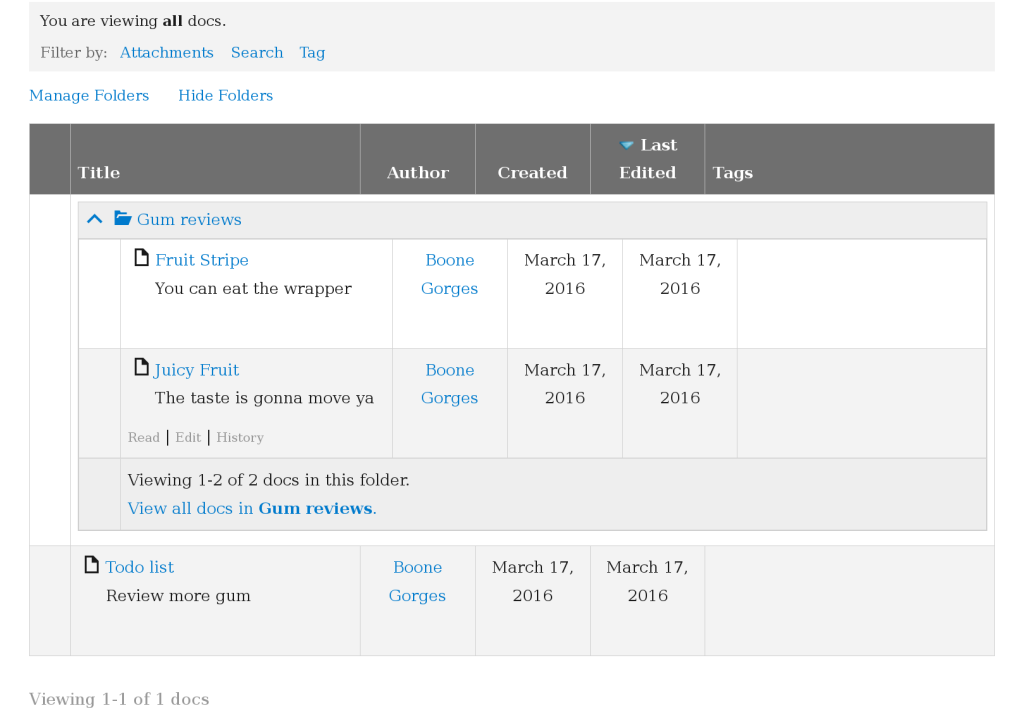

BuddyPress Docs 1.9.0 and Folder support

BuddyPress Docs is one of my more popular WordPress plugins. For years, one of the most popular feature requests has been the ability to sort Docs into folders. Docs 1.9.0, released earlier this week, finally introduced folder functionality.

The feature is pretty cool. When editing a Doc within the context of a group, you can select an existing folder, or create a new one, in which the Doc should appear. Folders can be nested arbitrarily. Breadcrumbs at the top of each Doc and each directory help to orient the reader. And a powerful, AJAX-powered directory interface makes it easy to drill down through the folder hierarchy. (Folders are currently limited to groups, which simplifies the question of where a given folder “lives”. An experimental plugin allows individual users to use folders to organize their personal Docs.)

I’ve got a couple reasons for drawing attention to this release. First, the Folders feature was developed as part of contract work I did for University of Florida Health. They use WordPress and BuddyPress for some of their internal workspaces, and the improvements to BuddyPress Docs have helped them to build a platform customized to their users’ specific needs. My partnership with UF Health is a great instance of a client commissioning a feature that then gets rolled into a publicly available tool – the type of patronage that demonstrates the best parts of free software development as well as IT in the public sector.

A bonus side note: UF Health Web Services is currently hiring a full-time web developer. If you know PHP, and want a chance to work with cool people on cool projects – including WordPress and BuddyPress – check out the job listing.

The other fun thing about this release is that it’s the first major release of Docs where I’ve worked closely with David Cavins, master luthier and BuddyPress maven. He’s a longtime contributor to Docs, and has done huge amounts of excellent work to bring 1.9.0 to fruition. Many thanks to David for his work on the release!

2015

Previously: 2014, 2013, 2012, 2011, 2010, 2009.

I wrote one year ago that 2015 would be a hard year. And so it was. Here’s the requisite Dec 31 braindump.

In January, I became a dad again. Seeing my two kids grow together and become friends has been one of the privileges of my life. But the logistics of having two kids are pretty different from (and much more exhausting than) those of having just one child. The process of finding balance is ongoing.

The other big event of the year is that, in July, our family moved from New York City to Chicago. Moving sucks. It’s expensive, it’s disorienting, it’s inconvenient. My possessions were in limbo with the moving company for something like 13 days. Practicalities aside, it’s hard to leave NYC. While I grew up in the Midwest, I spent my entire adult life in New York and feel like a New Yorker. New York features more prominently in its residents’ ideas about who they are than, say, Ohio does. In the same way as when I left graduate school, I’ve had to face this miniature identity crisis by reevaluating those aspects of my former life that are actually (ie, not just conventionally) central to what makes me tick, and then finding a way to fit them into the context of my new life. This project is also ongoing 🙂

Partly in response to my man-without-a-country malaise, and partly out of philosophical motivations, I poured myself into free software contribution in 2015. More than 50% of my working year was spent doing unpaid work on WordPress, BuddyPress, and related projects. (More details.) I’m a vocal proponent of structuring your work life in such a way that it subsidizes passion projects, though numbers like these make me wonder whether there’s a limit to how far this principle can be pushed. I guess I’ll continue to test these boundaries in 2016.

One of the things I’d like to do in 2016, as regards work balance, is to find more ways to work with cool people. I am a proud lone wolf, but sometimes I feel like there’s a big disconnect between my highly social free software work and my fairly solitary consulting work.

Happy new year!

WordCamp NYC talk on the history of the WordPress taxonomy component

At WordCamp NYC 2015, I was pleased to present on the history of the WordPress taxonomy component. Of all the WordCamp talks I’ve given, this one was the most fun to prepare. I spent days reading through old Trac tickets, the wp-hackers archives, and interview transcripts. The jokes are mostly mediocre and the Photoshopping is (mostly intentionally) lousy, but I think the talk turned out OK. Check it out below, or on wordpress.tv.

How to cherry-pick commits using Subversion (for WordPress at least)

I generally use git-svn for my work on WordPress and related projects. On occasion, I’m forced to touch svn directly. This occurs most often when merging commits from trunk to a stable branch: it’s best to do this in a way that preserves svn:mergeinfo, and git-svn doesn’t do it properly. Nearly every time I have to do these merges, I have to relearn the process. Here’s a reminder for Future Me.

- Commit as you normally do to trunk. Make note of the revision number. (Say, 36040.)

- Create a new svn checkout of the branch, or `svn up` your existing one. Eg: `$ svn co https://develop.svn.wordpress.org/branches/4.4 /path/to/wp44/`

- From within the branch checkout: `$ svn merge -c 36040 ^/trunk` In other words: merge the specific commit (or comma-separated commits) from trunk of the same repo.

- This will leave you with a dirty index (or whatever svn calls it). Use `svn status` to verify. Run unit tests, etc.

- `$ svn ci`. Copy the trunk commit message to EDITOR, and add a line along the lines of “Merges [36040] to the 4.4 branch”.

- Drink 11 beers.

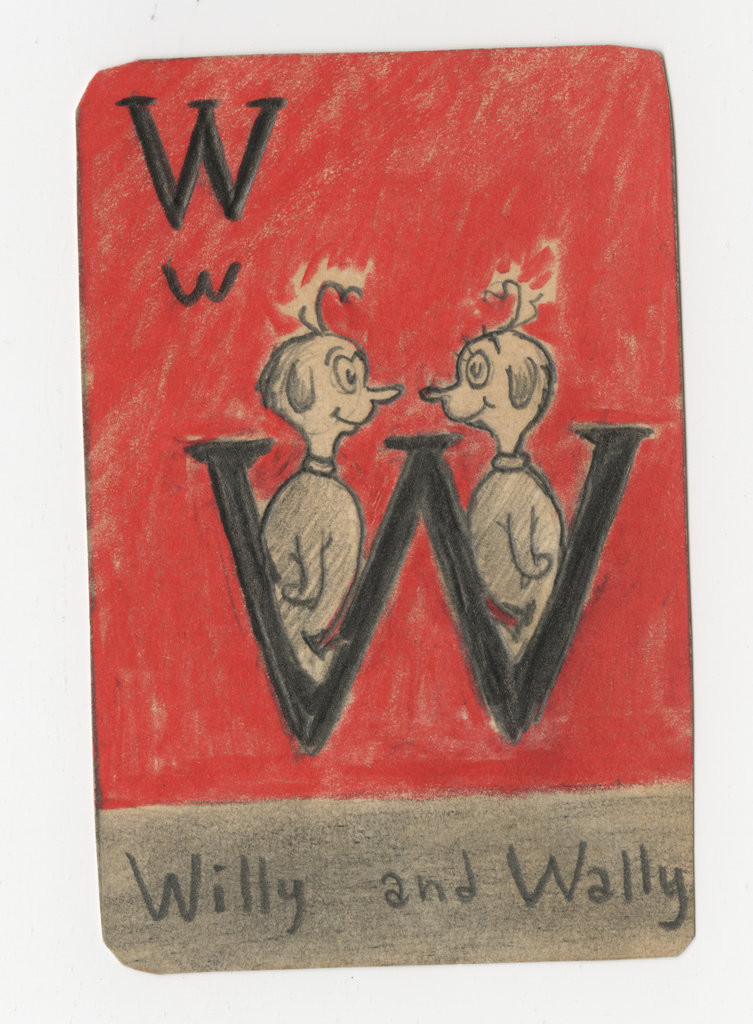

Willa and Wally

My kids are named Wilhelmina and Walter. So when I saw this image in a New York Times article about items recently unearthed in Dr Seuss’s filing cabinets, I swooned:

I sent a link to my wife, and told her I wished I could get it in poster size.

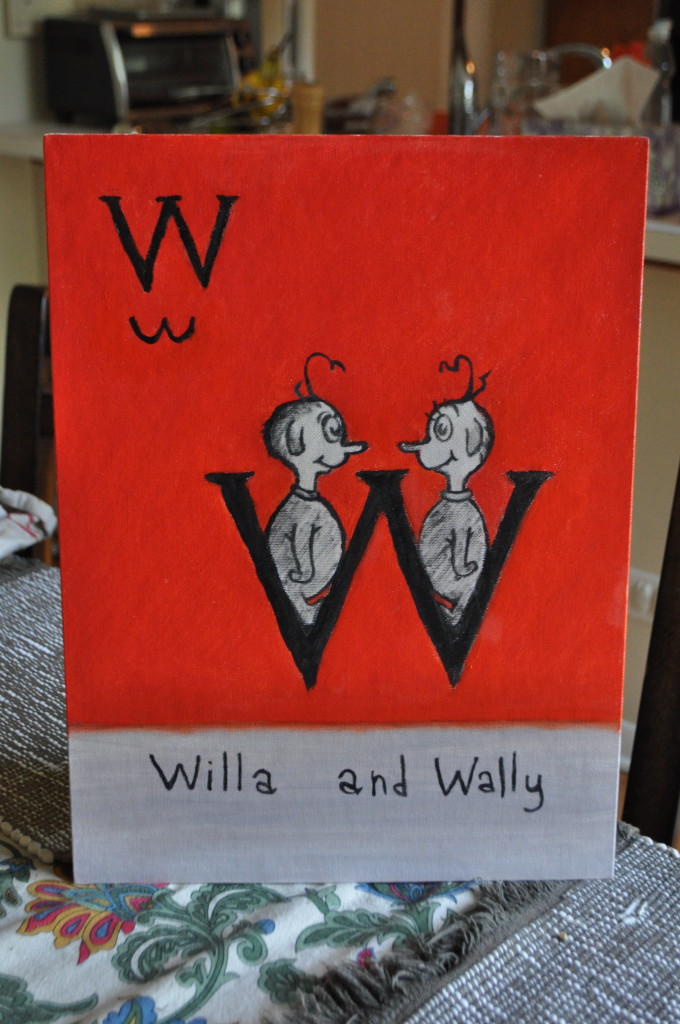

Yesterday, I got an early birthday present – my wife had sent the link to my mother, who painted me a slightly modified version:

Thanks, Mom and Rebecca 🙂

Very French Trip podcast

As part of my ongoing project to engage with the global WordPress community while simultaneously humbling myself, I’ve agreed to appear on the Very French Trip podcast on September 10, where I’ll be talking about how WordPress and BuddyPress get built. If you’re a French speaker, or if you like to hear me stumble, tune in for a rollicking listen.

And if you’re a French speaker, I invite you to submit questions ahead of the podcast. You can leave them in the comments on the VFT announcement, or on Twitter using the hashtag #VFTPodcast. See you soon 😉

A New York City farewell eating tour

Posting this mostly for my own records.

Next week, I’m moving away from New York. Starting with a pizza tour a few weeks ago, I’m trying to cram in some quality NYC meals in my last stretch as a New Yorker. Some of these are classic places, some are on the list for sentimental reasons. Here’s a summary of where I’ve been in the past few weeks, along with a few places slotted for my remaining 10 days:

First, the pizza joints:

Etc:

- Bagels: have been hitting my local, but would like to get a last trip to The Bagel Hole

- Court Pastry Shop, for the spumoni and maybe a lobster tail

- White sauce hot sauce – probably won’t make it to my favorite Halal cart (near Queens College), but trying to patronize all my neighborhood stands

- Some quality pastrami – probably Pastrami Queen, which is near my place and is ridiculous

- A last slice of the weirdly delicious cheesecake at my favorite diner

Custom version-based cache busting for WordPress CSS/JS assets

WordPress appends a query var like ?ver=4.2.1 to CSS/JS URIs when building <link> and <script> tags. When WP’s version changes (eg, from 4.2.1 to 4.2.2), the query var changes too, causing browsers to bypass their cached assets and forcing a fresh version to be requested from the server. This works well for core assets, because they generally don’t change between WP releases. But for assets loaded by plugins and themes – which don’t always provide their own ver string when enqueuing their scripts and styles – it’s not a perfect system, since browser caches will only be busted when WordPress core is updated.

I built a small filter for the CUNY Academic Commons that works around the problem by appending our custom CAC_VERSION string to all ver query vars. This ensures that users will get fresh assets after each CAC release, whether or not that release includes a WP update.
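The filter itself isn’t reproduced here. As a rough sketch of the idea (not the code actually running on the Commons), something like the following could hook WordPress’s `script_loader_src` and `style_loader_src` filters and tack CAC_VERSION onto any existing ver value. The function name `cac_bust_asset_cache` and the placeholder version number are assumptions made purely for illustration.

```php
<?php
// Assumption: CAC_VERSION is defined elsewhere and bumped on each release.
// The fallback value below is only a placeholder for illustration.
if ( ! defined( 'CAC_VERSION' ) ) {
	define( 'CAC_VERSION', '1.0.0' );
}

/**
 * Append CAC_VERSION to the 'ver' query var of an asset URL.
 *
 * Hypothetical helper: URLs without a 'ver' query var are returned unchanged.
 *
 * @param string $src    Asset URL, e.g. https://example.org/style.css?ver=4.2.1
 * @param string $handle Registered handle (unused here; could drive finer-grained rules).
 * @return string
 */
function cac_bust_asset_cache( $src, $handle = '' ) {
	return preg_replace_callback(
		'/([?&]ver=)([^&#]*)/',
		function ( $matches ) {
			// Keep the original version and add the site version after a dash.
			return $matches[1] . $matches[2] . '-' . rawurlencode( CAC_VERSION );
		},
		$src,
		1
	);
}

// Only hook in when running inside WordPress.
if ( function_exists( 'add_filter' ) ) {
	add_filter( 'script_loader_src', 'cac_bust_asset_cache', 10, 2 );
	add_filter( 'style_loader_src', 'cac_bust_asset_cache', 10, 2 );
}
```

With the placeholder version above, a URL ending in `style.css?ver=4.2.1` would come out as `style.css?ver=4.2.1-1.0.0`, so bumping CAC_VERSION changes the URL even when the WP version stays put.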

Note that this code is pretty aggressive: it busts all CSS/JS caches on every release. You could use the $handle argument to do something more fine-grained. Note also that this technique doesn’t work equally well for all caching setups; see this post for an alternative strategy, based only on filename. Proceed at your own risk.